Designing a target cloud architecture means more than deploying infrastructure: it requires aligning business objectives, technical requirements, and cloud provider capabilities. Done well, it gives a business the agility, scalability, and cost-efficiency it is looking for. The following discussion breaks cloud architecture design into its key components and provides a structured approach to cloud migration and optimization.

We will explore essential elements, including defining requirements, selecting cloud providers, designing compute and storage layers, establishing networking and security, implementing automation, and optimizing costs. This exploration will provide a foundational understanding, enabling informed decisions and efficient cloud resource utilization.

Defining the Target Cloud Architecture

The definition of a target cloud architecture is a crucial step in any cloud migration or new cloud-native initiative. This phase translates business needs into a concrete technical blueprint. It ensures that the cloud environment aligns with the organization’s objectives, providing a foundation for scalability, security, and cost-effectiveness. This involves carefully considering business goals and defining the non-functional requirements that will guide the architectural design.

Business Goals Supported by the Cloud Architecture

The cloud architecture must directly support the strategic objectives of the business. These goals dictate the functionality and performance characteristics required of the cloud infrastructure. The following are examples of business goals and how the cloud architecture can support them:

- Increase Market Agility: Cloud environments enable faster development cycles and deployment. By leveraging services like containerization (e.g., Docker, Kubernetes) and Infrastructure as Code (IaC), organizations can rapidly provision resources and adapt to changing market demands. For example, a retail company can quickly scale its e-commerce platform during peak shopping seasons without over-provisioning resources year-round.

- Reduce Operational Costs: Cloud providers offer various pricing models, including pay-as-you-go, which shifts spending from up-front capital expenditure (CAPEX) to usage-based operational expenditure (OPEX). Automating infrastructure management and optimizing resource utilization are key to keeping that operational spending low. An example is migrating an on-premises data center to the cloud, reducing the need for physical hardware maintenance, power consumption, and real estate costs.

- Improve Customer Experience: Cloud platforms can improve application performance and availability. Content Delivery Networks (CDNs) and global server deployments can reduce latency for users worldwide. A streaming service, for instance, can utilize CDNs to deliver video content to users across the globe with minimal buffering and high quality.

- Enhance Data-Driven Decision Making: Cloud-based data analytics services provide powerful tools for processing and analyzing large datasets. Machine learning and artificial intelligence services can be integrated to gain valuable insights. A financial institution could use cloud-based analytics to detect fraudulent transactions in real-time, improving security and customer trust.

- Strengthen Security and Compliance: Cloud providers offer robust security features and maintain compliance attestations (e.g., SOC 2 reports) and support for regulated workloads (e.g., those subject to HIPAA). These services provide a more secure infrastructure than many organizations can build and maintain on their own. A healthcare provider can build on such services to help meet HIPAA requirements for protecting patient data, while remaining responsible for configuring and using them correctly.

Non-Functional Requirements and Their Importance

Non-functional requirements define the quality attributes of a system, such as performance, security, and scalability. Defining these requirements and assigning importance levels is crucial for guiding the architecture design. The importance level is usually determined by business priorities, potential risks, and regulatory requirements. Here’s a breakdown of common non-functional requirements and their importance levels:

- Performance: This refers to the responsiveness and efficiency of the system. It encompasses metrics like latency, throughput, and resource utilization.

- Importance: High. A poorly performing application can lead to a poor user experience and lost revenue.

- Example: An e-commerce website must handle a high volume of transactions during peak hours with minimal latency.

- Scalability: The ability of the system to handle increased workloads without performance degradation.

- Importance: High. Cloud environments are designed to scale, so the architecture should leverage this capability.

- Example: A social media platform needs to scale its infrastructure to accommodate a growing user base.

- Security: The protection of data and resources from unauthorized access, use, disclosure, disruption, modification, or destruction.

- Importance: Critical. Security breaches can lead to significant financial and reputational damage.

- Example: A banking application must protect sensitive financial data from cyberattacks.

- Availability: The percentage of time the system is operational and accessible to users.

- Importance: High. High availability ensures business continuity and minimizes downtime.

- Example: A critical business application should have a high availability (e.g., 99.99% uptime) to avoid disruptions.

- Reliability: The ability of the system to perform its intended functions correctly and consistently.

- Importance: Medium to High. Reliable systems reduce the risk of data loss and system failures.

- Example: A backup and disaster recovery strategy ensures business continuity in the event of an outage.

- Cost Optimization: The efficient use of cloud resources to minimize spending.

- Importance: High. Cloud costs can quickly escalate if not managed properly.

- Example: Implementing resource optimization strategies, such as right-sizing instances and using reserved instances, can significantly reduce cloud costs.

- Maintainability: The ease with which the system can be modified, updated, and repaired.

- Importance: Medium. A maintainable system reduces the cost and effort of making changes.

- Example: Using modular architecture and IaC simplifies the process of updating and maintaining the system.

- Compliance: Adherence to regulatory and industry standards.

- Importance: Critical (depending on industry). Failure to comply can result in legal penalties.

- Example: Healthcare organizations must comply with HIPAA regulations, which require strict data protection measures.
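
An availability target such as 99.99% is easier to reason about once it is converted into a downtime budget. The following is a minimal sketch of that arithmetic; the function name and the 365-day default period are illustrative choices, not part of any provider's SLA definition.

```python
def allowed_downtime_minutes(availability_pct: float, period_days: float = 365.0) -> float:
    """Return the downtime budget, in minutes, implied by an availability target."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    # The unavailable fraction of the period is the downtime budget.
    return total_minutes * (1.0 - availability_pct / 100.0)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% uptime allows about {minutes:.1f} minutes of downtime per year")
```

Each additional "nine" cuts the budget by a factor of ten: 99.99% over a year leaves roughly 52.6 minutes, which is why the target should be set from business impact rather than picked by habit.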

Common Architectural Patterns for Target Cloud Environments

Selecting appropriate architectural patterns is crucial for achieving the desired non-functional requirements and aligning with business goals. The choice of patterns depends on the specific application, the cloud provider, and the desired level of agility and scalability. Here are examples of common architectural patterns:

- Microservices: A software development approach that structures an application as a collection of small, independently deployable services.

- Applicability: Ideal for complex applications that need to be highly scalable and maintainable.

- Benefits: Enables independent scaling of individual services, faster development cycles, and improved fault isolation.

- Example: An e-commerce platform might have separate microservices for product catalog, shopping cart, order processing, and payment gateway.

- Serverless: A cloud computing execution model where the cloud provider dynamically manages the allocation of machine resources.

- Applicability: Well-suited for event-driven applications, APIs, and workloads with variable traffic.

- Benefits: Reduces operational overhead, allows for automatic scaling, and offers a pay-per-use pricing model.

- Example: A photo-sharing application can use serverless functions to automatically resize images upon upload.

- Event-Driven Architecture: A design pattern where components of an application communicate asynchronously through events.

- Applicability: Suitable for systems that need to react to real-time events, such as user actions or system updates.

- Benefits: Improves scalability, loose coupling between components, and enables real-time data processing.

- Example: A ride-sharing service might use an event-driven architecture to notify drivers of new ride requests.

- Containerization: Packaging an application and its dependencies into a self-contained unit (container) that can be easily deployed across different environments.

- Applicability: Facilitates portability, consistency, and efficient resource utilization.

- Benefits: Simplifies application deployment, improves resource utilization, and promotes faster development cycles.

- Example: Deploying a web application inside a container to ensure consistent behavior across different cloud environments.

- API Gateway: A service that acts as a single entry point for all API requests.

- Applicability: Simplifies API management, security, and routing.

- Benefits: Provides centralized authentication, authorization, rate limiting, and traffic management.

- Example: An API gateway can manage and secure APIs for a mobile application, handling all incoming requests and routing them to the appropriate backend services.
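
To make the API gateway pattern concrete, here is a toy in-process sketch of the three responsibilities named above: authentication, rate limiting, and routing. It is not modeled on any specific gateway product; the class name, the route table, the service names, and the counter-based rate-limit window are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ApiGateway:
    """Toy gateway: authenticate, rate-limit, then route by path prefix."""

    routes: dict[str, str]        # path prefix -> backend service name (hypothetical)
    api_keys: set[str]            # keys allowed to call the gateway
    limit_per_window: int = 100   # max requests per key per window
    _counts: dict[str, int] = field(default_factory=dict, init=False)

    def reset_window(self) -> None:
        """Start a new rate-limit window (a real gateway would do this on a timer)."""
        self._counts.clear()

    def handle(self, api_key: str, path: str) -> tuple[int, str]:
        """Return an (HTTP status, detail) pair for one incoming request."""
        if api_key not in self.api_keys:
            return 401, "unknown API key"
        used = self._counts.get(api_key, 0)
        if used >= self.limit_per_window:
            return 429, "rate limit exceeded"
        self._counts[api_key] = used + 1
        # Longest matching prefix wins, so "/orders/returns" beats "/orders".
        matches = [prefix for prefix in self.routes if path.startswith(prefix)]
        if not matches:
            return 404, "no route"
        return 200, self.routes[max(matches, key=len)]


if __name__ == "__main__":
    gateway = ApiGateway(
        routes={"/catalog": "catalog-service", "/orders": "order-service"},
        api_keys={"mobile-app"},
        limit_per_window=2,
    )
    print(gateway.handle("mobile-app", "/orders/42"))
    print(gateway.handle("unknown-client", "/orders/42"))
```

Centralizing these checks means backend services such as the catalog or order service do not each need to reimplement them.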

Cloud Provider Selection

Selecting the appropriate cloud provider is a pivotal decision in designing a target cloud architecture. This choice significantly impacts the overall cost, performance, security, and scalability of the system. A careful evaluation of available options, based on specific requirements and business objectives, is essential for achieving optimal results.

Criteria for Selecting a Cloud Provider

The selection process necessitates a thorough assessment of several critical criteria. These factors, when weighed against organizational needs, guide the decision-making process and ensure alignment with strategic goals.

- Cost: Cloud pricing models vary significantly. Understanding the different pricing structures (pay-as-you-go, reserved instances, spot instances) and comparing costs across providers is crucial. Total cost of ownership (TCO) analysis should include not just compute and storage but also data transfer, support, and any associated service charges.

- Features and Services: The breadth and depth of services offered are a key differentiator. Consider the availability of services like compute, storage, databases, networking, machine learning, and serverless computing. Evaluate the maturity and features of each service to ensure they meet specific application requirements.

- Geographical Reach and Availability Zones: The geographic distribution of data centers impacts latency, data residency requirements, and disaster recovery capabilities. Consider the provider’s global presence, the number of availability zones within each region, and the ability to replicate data across regions.

- Performance: Performance characteristics such as compute power, network bandwidth, and storage I/O are critical. Benchmarking and testing applications on different providers can help determine which platform delivers the required performance levels.

- Security and Compliance: Security is paramount. Evaluate the provider’s security features, security practices, and the compliance attestations and regulatory frameworks it supports (e.g., SOC 2, HIPAA, GDPR). Assess the provider’s support for identity and access management (IAM), encryption, and threat detection.

- Support and Service Level Agreements (SLAs): Reliable support and well-defined SLAs are essential. Examine the provider’s support offerings, response times, and guaranteed uptime percentages. The SLAs should align with the application’s availability and performance requirements.

- Vendor Lock-in: Consider the potential for vendor lock-in. Evaluate the ease of migrating applications and data to other platforms if necessary. Look for providers that offer open standards, interoperability, and tools that facilitate portability.
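
One common way to weigh these criteria against each other is a weighted scoring matrix. The sketch below assumes each criterion is scored on a 1 to 5 scale and that weights reflect business priorities; the provider names, scores, and weights in the usage example are placeholders, not real evaluations.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Return the weighted average of criterion scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


def rank_providers(
    candidates: dict[str, dict[str, float]], weights: dict[str, float]
) -> list[tuple[str, float]]:
    """Return (provider, score) pairs sorted from best to worst."""
    ranked = [(name, weighted_score(scores, weights)) for name, scores in candidates.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical weights and scores, for illustration only.
    weights = {"cost": 0.3, "features": 0.2, "security": 0.3, "lock_in": 0.2}
    candidates = {
        "Provider A": {"cost": 3, "features": 5, "security": 4, "lock_in": 2},
        "Provider B": {"cost": 4, "features": 3, "security": 4, "lock_in": 4},
    }
    for name, score in rank_providers(candidates, weights):
        print(f"{name}: {score:.2f}")
```

The value of the exercise lies less in the final number than in forcing stakeholders to agree on the weights before comparing providers.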

Comparison of Cloud Providers

The major cloud providers – Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP) – each offer a comprehensive suite of services. Comparing their strengths and weaknesses requires a detailed analysis of their offerings and capabilities.

- Amazon Web Services (AWS): AWS is the market leader, boasting the largest market share and the broadest range of services. Its strengths include:

- Mature and Extensive Service Portfolio: AWS offers a vast array of services, covering virtually every aspect of cloud computing.

- Global Infrastructure: AWS has one of the largest global footprints, with numerous regions and availability zones.

- Strong Community and Ecosystem: AWS benefits from a large community, extensive documentation, and a rich ecosystem of third-party tools and services.

Weaknesses include:

- Complexity: The sheer number of services can be overwhelming for new users.

- Pricing Complexity: AWS pricing can be complex, making it difficult to predict costs.

- Microsoft Azure: Azure is the second-largest cloud provider and is particularly strong in hybrid cloud scenarios. Its strengths include:

- Hybrid Cloud Capabilities: Azure excels in hybrid cloud deployments, offering seamless integration with on-premises infrastructure and services.

- Strong Integration with Microsoft Products: Azure integrates well with Microsoft products like Windows Server, Active Directory, and .NET.

- Enterprise Focus: Azure is often favored by enterprise customers due to its robust security features and compliance certifications.

Weaknesses include:

- Smaller Market Share: Compared to AWS, Azure has a smaller market share.

- Service Maturity: Some Azure services may not be as mature as those offered by AWS.

- Google Cloud Platform (GCP): GCP is known for its innovation in data analytics, machine learning, and containerization. Its strengths include:

- Innovation in Data Analytics and Machine Learning: GCP offers cutting-edge services in data analytics, machine learning, and artificial intelligence.

- Competitive Pricing: GCP often offers competitive pricing, particularly for sustained use and committed use discounts.

- Strong Containerization Capabilities: GCP is a leader in containerization with its Kubernetes service (GKE).

Weaknesses include:

- Smaller Market Share: GCP has a smaller market share compared to AWS and Azure.

- Service Availability: Service availability in some regions may be limited.

Provider Service Comparison Table

The following table provides a simplified comparison of compute, storage, and networking services offered by AWS, Azure, and GCP. Note that the offerings are continuously evolving, and this table represents a snapshot in time.

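| Service | AWS | Azure | GCP |
|---|---|---|---|
| Compute | Amazon EC2 (VMs), AWS Lambda (serverless), Amazon EKS (Kubernetes) | Azure Virtual Machines, Azure Functions, Azure Kubernetes Service (AKS) | Compute Engine, Cloud Functions, Google Kubernetes Engine (GKE) |
| Storage | Amazon S3 (object), Amazon EBS (block), Amazon EFS (file) | Azure Blob Storage, Azure Managed Disks, Azure Files | Cloud Storage, Persistent Disk, Filestore |
| Networking | Amazon VPC, Elastic Load Balancing, AWS Direct Connect | Azure Virtual Network, Azure Load Balancer, Azure ExpressRoute | Virtual Private Cloud (VPC), Cloud Load Balancing, Cloud Interconnect |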

Designing the Compute Layer

The compute layer forms the operational heart of a cloud architecture, providing the processing power necessary to run applications. This section delves into the critical decisions involved in selecting the optimal compute resources, specifically focusing on virtual machines (VMs), containers, and serverless functions. The choice between these approaches hinges on factors such as application requirements, operational overhead, and cost considerations.

Understanding the trade-offs inherent in each option is paramount for designing a scalable, efficient, and cost-effective cloud solution.

Choosing Between Virtual Machines, Containers, and Serverless Functions

Selecting the appropriate compute model—VMs, containers, or serverless functions—is a pivotal architectural decision. Each offers distinct advantages and disadvantages, making the choice dependent on the application’s specific needs.

- Virtual Machines (VMs): VMs provide a high degree of control and isolation. They encapsulate an entire operating system, allowing for the execution of diverse applications with varying dependencies. This approach offers maximum flexibility, as it supports legacy applications and those requiring extensive customization. However, VMs incur significant overhead, as each VM requires a full operating system, leading to increased resource consumption and slower startup times.

The operational burden is also substantial, involving OS patching, security updates, and resource management. For example, a company migrating a large on-premises infrastructure to the cloud might choose VMs initially to maintain compatibility and control, even if it means higher operational costs in the short term.

- Containers: Containers offer a lightweight and portable alternative to VMs. They package an application and its dependencies into a self-contained unit, allowing for consistent execution across different environments. Containers share the host operating system’s kernel, resulting in faster startup times and reduced resource consumption compared to VMs. This makes them ideal for microservices architectures and applications that require rapid scaling and deployment.

Container orchestration platforms, such as Kubernetes, automate the deployment, scaling, and management of containerized applications. A retail company, for instance, could containerize its e-commerce platform, enabling quick deployments of new features and efficient scaling during peak shopping seasons.

- Serverless Functions: Serverless computing abstracts away the underlying infrastructure, allowing developers to focus solely on writing and deploying code. Functions are triggered by events, such as HTTP requests or database updates, and automatically scale based on demand. This model eliminates the need to manage servers, leading to reduced operational overhead and cost savings. Serverless is particularly well-suited for event-driven applications, APIs, and background processing tasks.

A social media platform might utilize serverless functions for processing image uploads, triggering notifications, or handling user authentication. The primary drawback is the limited control over the underlying infrastructure and the potential for vendor lock-in.
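
The trade-offs above can be condensed into a rough rule of thumb. The sketch below encodes only the guidance given in this section; the `Workload` fields are simplifying assumptions (in particular, `short_lived` stands in for the execution-time limits that serverless platforms typically impose), and a real decision would also weigh cost, team skills, and existing tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    needs_os_level_control: bool  # legacy software or heavy OS customization
    event_driven: bool            # triggered by HTTP requests, uploads, or data changes
    short_lived: bool             # each invocation finishes quickly (assumption)


def suggest_compute_model(workload: Workload) -> str:
    """Return a first-pass suggestion; treat it as a starting point, not a verdict."""
    if workload.needs_os_level_control:
        # Full control and isolation outweigh the overhead of a guest OS.
        return "virtual machines"
    if workload.event_driven and workload.short_lived:
        # No servers to manage, and scaling follows the event rate.
        return "serverless functions"
    # Default: portable, fast to start, and a natural fit for microservices.
    return "containers"


if __name__ == "__main__":
    image_resizer = Workload(needs_os_level_control=False, event_driven=True, short_lived=True)
    print(suggest_compute_model(image_resizer))
```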

Designing a Containerized Application Deployment with Kubernetes

Kubernetes (K8s) is a powerful container orchestration platform that automates the deployment, scaling, and management of containerized applications. A well-designed Kubernetes deployment ensures high availability, scalability, and efficient resource utilization.

The following diagram illustrates a containerized application deployment with Kubernetes:

Diagram Description:

The diagram depicts a Kubernetes cluster comprising several nodes (virtual machines or physical servers). Each node runs a container runtime, such as containerd or CRI-O. The application is deployed as a set of pods. A pod is the smallest deployable unit in Kubernetes and can contain one or more containers. A service, defined in Kubernetes, provides a stable IP address and DNS name for accessing the pods.

The diagram includes the following components and their interactions:

- Users/Clients: Representing external users or services that interact with the application.

- Load Balancer: Directs traffic to the service, distributing it across the pods.

- Service: Provides a stable endpoint for accessing the application pods. The service uses labels and selectors to identify the pods it manages.

- Pods: Each pod contains one or more containers that run the application code. The pods are managed by Kubernetes, ensuring they are running and available.

- Containers: The actual application code and its dependencies are packaged within containers.

- Nodes: The underlying infrastructure (VMs or physical servers) that host the pods.

- Kubernetes Control Plane: Manages the cluster, including scheduling pods, scaling deployments, and monitoring the health of the cluster.

- Data Storage: Represents persistent storage, such as databases or object storage, used by the application.

The workflow can be described as follows:

- Users/clients send requests to the application through the load balancer.

- The load balancer distributes the traffic to the service.

- The service forwards the traffic to the appropriate pods based on labels and selectors.

- The pods, which contain the application containers, process the requests.

- The containers access any necessary data storage.

- Kubernetes continuously monitors the health of the pods and automatically restarts or reschedules them if necessary.

- The Kubernetes control plane continuously reconciles the cluster toward its declared desired state. When an autoscaler such as the Horizontal Pod Autoscaler is configured, it also scales the application up or down based on demand.
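
The relationship between the service and its pods can be sketched by generating the two manifests involved. The example below builds a Deployment and a Service as plain Python dictionaries and prints them as JSON, a format `kubectl` accepts alongside YAML. The application name, image reference, label, and port numbers are hypothetical.

```python
import json

# Hypothetical label shared by the pods and the service selector.
APP_LABELS = {"app": "web"}


def build_deployment(name: str, image: str, replicas: int) -> dict:
    """Describe a set of identical pods that Kubernetes keeps running."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(APP_LABELS)},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": dict(APP_LABELS)},
            "template": {
                "metadata": {"labels": dict(APP_LABELS)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": 8080}]}
                    ]
                },
            },
        },
    }


def build_service(name: str) -> dict:
    """Describe a stable endpoint that selects pods by label."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",  # asks the cloud provider for an external load balancer
            "selector": dict(APP_LABELS),
            "ports": [{"port": 80, "targetPort": 8080}],
        },
    }


if __name__ == "__main__":
    manifests = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [
            build_deployment("web", "registry.example.com/web:1.0", replicas=3),
            build_service("web"),
        ],
    }
    print(json.dumps(manifests, indent=2))
```

The key detail is that the service never names individual pods: it selects whatever pods carry the matching label, which is what lets Kubernetes replace or reschedule pods without clients noticing.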

Best Practices for Optimizing Compute Resources in a Cloud Environment

Optimizing compute resources is crucial for minimizing costs and maximizing performance in a cloud environment. Employing the following best practices can significantly improve resource utilization and efficiency.

- Right-Sizing Instances: Choose instance types that align with the application’s resource requirements. Over-provisioning leads to wasted resources and increased costs, while under-provisioning can result in performance bottlenecks. Regularly monitor resource utilization and adjust instance sizes accordingly. For example, a company using VMs for web servers should monitor CPU and memory usage and scale the instances up or down based on traffic patterns.

- Auto-Scaling: Implement auto-scaling to automatically adjust the number of instances based on demand. This ensures that the application has sufficient resources during peak loads and reduces costs during periods of low activity. Configure auto-scaling rules based on metrics such as CPU utilization, memory usage, or network traffic.

- Resource Monitoring and Alerting: Implement comprehensive monitoring to track resource utilization, application performance, and error rates. Set up alerts to notify administrators of potential issues, such as high CPU usage or memory leaks. Utilize cloud provider-specific monitoring tools, such as Amazon CloudWatch or Azure Monitor, to gain detailed insights into resource consumption.

- Cost Optimization Tools: Leverage cost optimization tools provided by cloud providers to identify and address areas of waste. These tools can recommend instance size changes, identify idle resources, and suggest reserved instance purchases. For example, AWS Cost Explorer can help identify which EC2 instances are underutilized and suggest right-sizing recommendations.

- Containerization and Orchestration: Utilize containerization technologies like Docker and orchestration platforms like Kubernetes to improve resource utilization and simplify application deployments. Containers are lightweight and portable, enabling efficient use of resources. Kubernetes automates the deployment, scaling, and management of containerized applications, further optimizing resource utilization.

- Serverless Architecture: Consider serverless functions for event-driven tasks and APIs. Serverless computing eliminates the need to manage servers, leading to reduced operational overhead and cost savings. It automatically scales based on demand, ensuring efficient resource utilization. A good example is using AWS Lambda to process images uploaded to an S3 bucket, where the function scales automatically based on the number of images uploaded.

- Optimize Code and Application Design: Ensure that applications are optimized for performance and resource efficiency. This includes writing efficient code, using appropriate data structures, and minimizing network traffic. Regularly review and refactor code to identify and eliminate performance bottlenecks.
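
Auto-scaling rules ultimately reduce to a small calculation: compare the observed metric with its target and adjust capacity proportionally. The sketch below is modeled on the formula documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current replicas x current metric / target metric), with a 10% tolerance band by default); the function name and the min/max defaults are illustrative assumptions.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Return the replica count that brings the metric back toward its target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to target: avoid flapping by leaving capacity unchanged.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Never scale beyond the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Four instances averaging 90% CPU against a 60% target.
    print(desired_replicas(current_replicas=4, current_metric=90, target_metric=60))
```

The upper bound doubles as a cost control: without it, a traffic spike or a runaway metric could scale spending along with capacity.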

Storage Solutions

Choosing the appropriate storage solution is a critical aspect of cloud architecture design, directly impacting performance, scalability, and cost-effectiveness. The selection process requires a thorough understanding of the various storage options available, their respective use cases, and the factors influencing their suitability for specific workloads.

Storage Options

Cloud providers offer a diverse range of storage solutions, each optimized for different data types and access patterns. Understanding these options is fundamental to making informed architectural decisions.

- Object Storage: Object storage stores data as objects within a flat namespace, without a hierarchical directory structure. Objects consist of data, metadata, and a unique identifier. This approach provides high scalability, durability, and cost-effectiveness for unstructured data.

- Block Storage: Block storage presents data as raw, unformatted blocks, similar to a physical hard drive. It offers high performance and low latency, making it suitable for applications requiring direct access to storage at the block level.

- File Storage: File storage organizes data in a hierarchical file system, similar to a traditional file server. It supports protocols like NFS and SMB, enabling file sharing and collaboration among multiple users and applications.

Use Cases for Each Storage Type

The suitability of a storage solution depends heavily on the application’s requirements. Here are some examples illustrating the application of each storage type.

- Object Storage Use Cases: Object storage excels in scenarios involving large volumes of unstructured data.

- Data archiving and backup: Storing infrequently accessed data for long-term retention, such as historical records or disaster recovery backups. For example, Amazon S3 Glacier is designed for low-cost data archiving.

- Content delivery: Serving as the origin for content delivery networks (CDNs) that distribute media files, software updates, and other static content globally, leveraging the scalability and availability of object storage.

- Big data analytics: Storing and processing large datasets for data warehousing and analytics platforms. Services like Amazon S3 are frequently used as a data lake.

- Block Storage Use Cases: Block storage is optimized for applications demanding high performance and low latency.

- Virtual machine (VM) storage: Providing storage volumes for virtual machines, allowing for fast boot times and efficient I/O operations.

- Database storage: Supporting databases that require high I/O throughput and consistent performance. Examples include relational databases and NoSQL databases.

- High-performance computing (HPC): Meeting the demanding storage requirements of scientific simulations, financial modeling, and other compute-intensive workloads.

- File Storage Use Cases: File storage is designed for scenarios requiring shared access and a hierarchical file system.

- Shared file systems: Enabling multiple users and applications to access and share files, such as in collaborative environments or content management systems.

- Network file shares: Providing centralized file storage for on-premises or cloud-based applications that need to access files over a network.

- Application development: Storing source code, configuration files, and other files needed for application development and deployment.

Factors to Consider When Selecting a Storage Solution

Selecting the optimal storage solution involves a comprehensive evaluation of various factors. These considerations directly influence the performance, cost, and overall efficiency of the cloud architecture.

- Performance: This involves assessing read and write speeds, latency, and I/O operations per second (IOPS). The required performance should align with the application’s demands. For example, databases often necessitate high IOPS.

- Cost: Evaluate the cost of storage per gigabyte, data transfer charges, and any associated operational costs. Different storage tiers (e.g., standard, infrequent access, archive) offer varying cost structures.

- Durability: Durability measures how likely stored data is to survive without loss or corruption. It is distinct from availability, which measures whether the data can be accessed at a given moment. Cloud providers typically express durability as the annual probability that an object is not lost. For instance, object storage services such as Amazon S3 are designed for 99.999999999% (11 nines) durability.

- Scalability: The ability to scale storage capacity and performance as the application grows is critical. Choose a solution that can accommodate increasing data volumes and user demands without significant architectural changes.

- Availability: High availability is crucial for business-critical applications. Consider the storage solution’s availability SLAs and the redundancy mechanisms it provides.

- Data Access Patterns: Analyze how frequently data will be accessed, the access methods (e.g., read-heavy, write-heavy), and the geographic distribution of users. These patterns will influence the choice of storage type and access methods.

- Security: Implement robust security measures to protect data at rest and in transit. Consider encryption, access control policies, and compliance requirements.

- Data Lifecycle Management: Establish policies for managing data throughout its lifecycle, including archiving, deletion, and data tiering. This helps optimize storage costs and ensure compliance.
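
Durability figures become more tangible when translated into expected losses. The following sketch assumes the durability figure is an annual, per-object probability and that losses are independent, which is a simplification; the function name is illustrative.

```python
def expected_annual_object_loss(object_count: int, nines: int) -> float:
    """Return the expected number of objects lost per year at a given durability.

    `nines` is the number of nines in the durability figure,
    e.g. 11 for 99.999999999%.
    """
    if object_count < 0 or nines < 1:
        raise ValueError("object_count must be >= 0 and nines must be >= 1")
    annual_loss_probability = 10.0 ** -nines
    return object_count * annual_loss_probability


if __name__ == "__main__":
    objects = 10_000_000
    expected = expected_annual_object_loss(objects, nines=11)
    print(f"Expected objects lost per year: {expected:.6f}")
    print(f"Roughly one object lost every {1 / expected:,.0f} years")
```

At 11 nines, ten million stored objects correspond to an expected loss of about one object every ten thousand years, so for most workloads accidental deletion and misconfiguration are far more realistic risks than storage-level loss.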

Networking and Connectivity: Establishing Cloud Infrastructure

Cloud networking is a critical foundation for any cloud architecture, dictating how resources communicate within the cloud and with external environments. A well-designed network ensures security, performance, and availability of cloud-based applications and services. This section details the essential components of cloud networking and the methods for establishing robust connectivity.

Key Components of Cloud Networking

Cloud networking fundamentally relies on several key components that control traffic flow, security, and resource isolation. These components work together to create a secure and efficient network environment.

- Virtual Private Clouds (VPCs): VPCs provide logically isolated sections of the cloud provider’s network. Each VPC functions as a private network, allowing users to define their own IP address ranges, subnets, and routing rules. This isolation is essential for security and controlling network traffic.

- Subnets: Subnets are subdivisions within a VPC, allowing for further segmentation of network resources. They are defined by IP address ranges and used to group resources based on function, security requirements, or placement (for example, one subnet per availability zone). Each subnet is associated with a routing table, which determines whether it is public (routed to an internet gateway) or private.

- Security Groups: Security groups act as virtual firewalls at the instance level, controlling inbound and outbound traffic based on defined rules. These rules specify which protocols, ports, and source/destination IP addresses are allowed; on AWS, security groups support allow rules only, and any traffic not explicitly allowed is denied. Security groups are stateful, meaning that if an inbound request is allowed, the corresponding outbound response is also automatically permitted.

- Network Access Control Lists (NACLs): NACLs provide an additional layer of security at the subnet level, offering stateless filtering of traffic. Because they are stateless, inbound and outbound traffic are evaluated independently, so return traffic must be permitted by an explicit rule. Unlike security groups, NACLs support both allow and deny rules and can block traffic based on IP address, protocol, and port. They are coarser than security groups, since they apply to every resource in the subnet, and work best as a broad guardrail alongside instance-level rules.

- Routing Tables: Routing tables define the paths that network traffic takes within a VPC. They map destination IP address ranges to specific targets, such as internet gateways, virtual private gateways, or other instances within the VPC. Proper routing is critical for ensuring that traffic reaches its intended destination.

- Internet Gateways: Internet gateways provide a connection between a VPC and the public internet. They enable instances within the VPC to access public resources and allow external users to access public-facing services hosted within the VPC.

- Virtual Private Gateways: Virtual private gateways enable connectivity between a VPC and an on-premises network or another VPC. They support VPN and Direct Connect connections, allowing secure and private communication between cloud and on-premises resources.
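
Planning IP address ranges is usually the first concrete step in setting up a VPC. The sketch below uses Python's standard `ipaddress` module to carve a VPC range into equally sized subnets; the CIDR block and subnet names are hypothetical, and providers typically reserve a few addresses in each subnet (AWS reserves five), so usable capacity is slightly lower than the raw count.

```python
import ipaddress


def plan_subnets(
    vpc_cidr: str, new_prefix: int, names: list[str]
) -> dict[str, ipaddress.IPv4Network]:
    """Assign consecutive, non-overlapping subnets of the VPC range to the given names."""
    vpc = ipaddress.ip_network(vpc_cidr)
    available = vpc.subnets(new_prefix=new_prefix)  # generator of equally sized blocks
    plan = {}
    for name in names:
        try:
            plan[name] = next(available)
        except StopIteration:
            raise ValueError(f"{vpc_cidr} has no room left for subnet {name!r}") from None
    return plan


if __name__ == "__main__":
    plan = plan_subnets("10.0.0.0/16", 24, ["public-a", "private-app-a", "private-db-a"])
    for name, subnet in plan.items():
        print(f"{name}: {subnet} ({subnet.num_addresses} addresses)")
```

Leaving unallocated blocks in the VPC range, as this example does, keeps room for additional subnets or availability zones later.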

Multi-VPC Architecture with Peering

A multi-VPC architecture offers several advantages, including enhanced security, improved isolation, and better organization of resources. VPC peering allows for direct network connections between VPCs, enabling resources in different VPCs to communicate with each other as if they were within the same network.

Network Diagram Illustration:

Consider a multi-VPC architecture with two primary VPCs: “Web Applications VPC” and “Database VPC”.

Web Applications VPC:

- Contains a public subnet with a load balancer and web servers. These servers handle incoming user requests.

- Contains a private subnet with application servers that handle the business logic and interact with the database.

- An Internet Gateway is attached to the VPC to allow web servers to access the internet.

Database VPC:

- Contains a private subnet with database servers. These servers store and manage the application data.

- This VPC does not have direct internet access for enhanced security.

VPC Peering:

- A VPC peering connection is established between the “Web Applications VPC” and the “Database VPC.”

- This connection allows the application servers in the “Web Applications VPC” to communicate with the database servers in the “Database VPC” directly, without traversing the public internet.

Routing Configuration:

- Routing tables in each VPC are configured to direct traffic destined for the peered VPC’s IP address ranges through the peering connection.

Security Considerations:

- Security groups and NACLs are configured to control traffic flow between the subnets and VPCs, ensuring only authorized traffic is allowed.

Diagram Description: The diagram illustrates two rectangular boxes representing the two VPCs, with each box internally divided into subnets. Lines with arrows indicate the flow of traffic between the subnets and VPCs, showing how the application servers in the Web Applications VPC communicate with the database servers in the Database VPC via the peering connection. The Internet Gateway in the Web Applications VPC is also depicted, connecting to the public internet.
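The routing and addressing rules for this two-VPC layout can be sketched with Python's standard `ipaddress` module. This is a planning aid under stated assumptions, not a provider API call: the CIDR ranges, the VPC names, and the `pcx-example` identifier are hypothetical. The check reflects the common requirement that peered VPCs use non-overlapping address ranges.

```python
import ipaddress
from itertools import islice


def plan_peering_routes(web_cidr: str, db_cidr: str, peering_id: str = "pcx-example"):
    """Return the route each VPC needs so traffic for the peer uses the peering link."""
    web = ipaddress.ip_network(web_cidr)
    db = ipaddress.ip_network(db_cidr)
    if web.overlaps(db):
        raise ValueError(f"{web} and {db} overlap; peered VPCs need distinct ranges")
    return {
        "web-applications-vpc": [(str(db), peering_id)],
        "database-vpc": [(str(web), peering_id)],
    }


def carve_subnets(vpc_cidr: str, new_prefix: int, count: int):
    """Split a VPC range into the first `count` subnets of the given prefix length."""
    network = ipaddress.ip_network(vpc_cidr)
    return [str(subnet) for subnet in islice(network.subnets(new_prefix=new_prefix), count)]


print(carve_subnets("10.0.0.0/16", 24, 2))  # ['10.0.0.0/24', '10.0.1.0/24']
print(plan_peering_routes("10.0.0.0/16", "10.1.0.0/16"))
```

Here the first two /24 ranges could serve as the public and private subnets of the Web Applications VPC, while the returned route entries correspond to the routing configuration described above.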

Methods for Connecting to the Cloud from On-Premises Environments

Connecting on-premises environments to the cloud is essential for hybrid cloud deployments, enabling access to cloud resources from existing infrastructure. Two primary methods are used to establish this connectivity: VPN and Direct Connect.

- Virtual Private Network (VPN): VPN connections create a secure, encrypted tunnel over the public internet. This method is cost-effective and relatively easy to set up. The gateways on the on-premises side and on the cloud side each encrypt the traffic they send and decrypt the traffic they receive, enabling secure communication in both directions.

- Direct Connect: Direct Connect (the AWS name; Azure ExpressRoute and Google Cloud Interconnect are the equivalents) provides a dedicated network connection between the on-premises environment and the cloud provider. This connection bypasses the public internet, offering higher bandwidth, lower latency, and more consistent performance compared to VPN connections. Direct Connect is typically used for high-volume data transfers and applications that require low latency. The physical connection can be established through a third-party provider or directly with the cloud provider.

Comparison:

| Feature | VPN | Direct Connect |
|---|---|---|
| Cost | Lower | Higher |
| Latency | Higher and more variable | Lower and more consistent |
| Bandwidth | Lower | Higher |
| Security | Encrypted tunnel over the public internet | Private dedicated link; typically not encrypted by default, so add encryption where required |
| Setup Complexity | Easier | More Complex |
| Use Cases | Hybrid cloud, infrequent data transfer | High-volume data transfer, low-latency applications |
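The bandwidth row matters most for bulk transfers, and the effect can be estimated with simple arithmetic. The sketch below is a rough calculation only: the link speeds and the `efficiency` factor (the share of nominal bandwidth actually achieved) are assumptions, not measured figures for any provider.

```python
def transfer_hours(data_tb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Estimate hours needed to move `data_tb` decimal terabytes over a link."""
    bits = data_tb * 8e12                          # 1 TB = 8 * 10^12 bits
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return bits / usable_bits_per_second / 3600


# Hypothetical comparison: 50 TB over a 0.5 Gbps VPN versus a 10 Gbps dedicated link.
for label, gbps in [("VPN (0.5 Gbps)", 0.5), ("Dedicated (10 Gbps)", 10.0)]:
    print(f"{label}: {transfer_hours(50, gbps):.1f} hours")
```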

Security Considerations: Protecting Your Cloud Environment

Securing a cloud environment is paramount for data integrity, business continuity, and regulatory compliance. Cloud security involves a multi-layered approach, encompassing various controls and practices to mitigate risks. Understanding the shared responsibility model and implementing security best practices are crucial for a robust and resilient cloud architecture.

Shared Responsibility Model in Cloud Computing

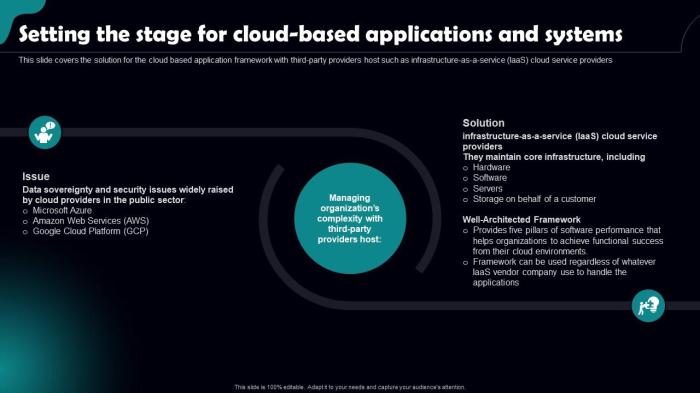

The shared responsibility model defines the security obligations of both the cloud provider and the customer. This model acknowledges that the cloud provider is responsible for securing the underlying infrastructure, while the customer is responsible for securing the data and applications they deploy in the cloud. The division of responsibilities varies depending on the cloud service model (IaaS, PaaS, or SaaS).

- Infrastructure as a Service (IaaS): The cloud provider manages the physical infrastructure, including servers, storage, and networking. The customer is responsible for securing the operating systems, applications, data, and access controls.

- Platform as a Service (PaaS): The cloud provider manages the underlying infrastructure and provides a platform for developing and deploying applications. The customer is responsible for securing the applications and data they deploy on the platform.

- Software as a Service (SaaS): The cloud provider manages the entire application and infrastructure. The customer is primarily responsible for data security, user access, and configuration settings.

The shared responsibility model can be summarized as follows:

- Cloud Provider Responsibilities: Physical security, infrastructure security (hardware, network, virtualization), and security of the underlying services.

- Customer Responsibilities: Data security, application security, identity and access management, operating system and configuration security, and incident response.

Security Best Practices for Cloud Architecture

Implementing security best practices is essential for protecting cloud environments. A proactive approach, encompassing various security controls, is necessary to mitigate potential threats. Here are some security best practices for cloud architecture:

- Identity and Access Management (IAM): Implement strong IAM policies with least privilege access. Use multi-factor authentication (MFA) to verify user identities. Regularly review and audit access permissions.

- Data Encryption: Encrypt data at rest and in transit. Utilize encryption keys managed by the cloud provider or your own key management system (KMS).

- Network Security: Implement firewalls, intrusion detection and prevention systems (IDS/IPS), and virtual private networks (VPNs) to secure network traffic. Segment the network to isolate critical resources.

- Vulnerability Management: Regularly scan for vulnerabilities and patch systems promptly. Implement automated vulnerability scanning tools.

- Security Monitoring and Logging: Enable comprehensive logging and monitoring. Use security information and event management (SIEM) systems to analyze logs and detect security incidents.

- Incident Response: Develop and test an incident response plan. Establish clear procedures for identifying, containing, and recovering from security incidents.

- Compliance and Governance: Adhere to relevant regulatory compliance requirements (e.g., HIPAA, GDPR). Implement governance policies to ensure security controls are consistently applied.

- Data Backup and Recovery: Implement a robust data backup and recovery strategy to protect against data loss. Regularly test data recovery procedures.

- Security Training and Awareness: Provide security training to all users. Promote a security-conscious culture within the organization.

- Regular Security Assessments: Conduct regular security assessments, including penetration testing and vulnerability assessments, to identify and address security weaknesses.
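Reviewing access permissions for least privilege can be partly automated. The following Python sketch scans a policy document in the AWS IAM JSON layout (`Statement`, `Effect`, `Action`, `Resource`) and flags wildcard grants. The function name, the sample policy, and the bucket name are hypothetical, and the wildcard check is a simple heuristic rather than a complete policy analysis.

```python
def find_overly_broad_statements(policy: dict) -> list:
    """Flag Allow statements that grant wildcard actions or wildcard resources."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):      # a single statement may appear unwrapped
        statements = [statements]

    findings = []
    for index, statement in enumerate(statements):
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        resources = statement.get("Resource", [])
        if isinstance(actions, str):
            actions = [actions]
        if isinstance(resources, str):
            resources = [resources]

        broad_actions = [a for a in actions if a == "*" or a.endswith(":*")]
        broad_resources = [r for r in resources if r == "*"]
        if broad_actions or broad_resources:
            findings.append(
                {"statement": index, "actions": broad_actions, "resources": broad_resources}
            )
    return findings


sample_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::example-bucket/*"},   # narrowly scoped
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},  # too broad
    ],
}
print(find_overly_broad_statements(sample_policy))
```

A check like this could run in a review pipeline so that broad grants are questioned before they reach production.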

Security Services Offered by Cloud Providers

Cloud providers offer a wide range of security services to help customers protect their cloud environments. These services can be used to implement the security best practices. The following table details some security services offered by cloud providers:

| Service | Description | Benefits | Examples |
|---|---|---|---|
| Identity and Access Management (IAM) | Manages user identities, access permissions, and authentication mechanisms. | Enforces least privilege access, controls user access to resources, and simplifies user management. | AWS IAM, Azure Active Directory (now Microsoft Entra ID), Google Cloud IAM |
| Web Application Firewall (WAF) | Protects web applications from common web exploits, such as SQL injection and cross-site scripting (XSS). | Mitigates web application vulnerabilities, helps absorb application-layer DDoS attacks, and improves application security posture. | AWS WAF, Azure WAF, Google Cloud Armor |
| Encryption Services | Provides services for encrypting data at rest and in transit. Includes key management services (KMS). | Protects data confidentiality, meets compliance requirements, and allows for secure data storage. | AWS KMS, Azure Key Vault, Google Cloud KMS |
| Security Information and Event Management (SIEM) | Collects, analyzes, and correlates security logs and events from various sources. | Provides real-time security monitoring, detects security threats, and supports incident response. | AWS Security Hub (aggregates findings; often paired with a separate SIEM), Microsoft Sentinel (formerly Azure Sentinel), Google Cloud Security Command Center |

Data Management and Governance

Data management and governance are critical pillars of a successful cloud architecture. They ensure the reliability, security, and compliance of data assets within the cloud environment. Effective data governance minimizes risks, supports informed decision-making, and maximizes the value derived from data. Without robust data management and governance practices, organizations risk data breaches, non-compliance with regulations, and ultimately, a loss of trust and business value.

Importance of Data Governance in a Cloud Environment

Data governance in the cloud establishes policies, procedures, and controls for managing data throughout its lifecycle. This encompasses data creation, storage, usage, archiving, and disposal. It is essential for maintaining data integrity, security, and compliance with regulatory requirements.

- Data Integrity: Data governance ensures data accuracy, consistency, and completeness. This is crucial for reliable analytics, reporting, and decision-making. Without governance, data quality can degrade, leading to flawed insights and potentially incorrect business decisions.

- Data Security: Cloud environments introduce new security challenges. Data governance defines and enforces security policies, including access controls, encryption, and monitoring, to protect sensitive data from unauthorized access and breaches.

- Compliance: Data governance helps organizations meet regulatory requirements, such as GDPR, HIPAA, and CCPA. It ensures data is collected, stored, and processed in accordance with applicable laws and regulations, mitigating legal and financial risks.

- Data Accessibility: Effective governance promotes data accessibility for authorized users, facilitating data-driven insights and business agility. Proper governance includes clear data catalogs, metadata management, and data lineage tracking.

- Cost Optimization: By managing data storage and usage effectively, data governance helps control cloud costs. Strategies such as data archiving and lifecycle management can reduce unnecessary storage expenses.

Data Management Strategies

Data management strategies are implemented to protect data from loss, corruption, and unauthorized access. These strategies encompass a range of techniques to ensure data availability and resilience.

- Data Backup: Regularly backing up data is a fundamental component of data management. Cloud providers offer various backup solutions, including point-in-time recovery, snapshotting, and replication. The frequency and type of backup should align with the business's Recovery Point Objective (RPO), the maximum tolerable data loss, and its Recovery Time Objective (RTO), the maximum tolerable time to restore service. For instance, a critical database might require hourly backups (an RPO of one hour) with a short RTO, while archival data might use less frequent backups.

- Disaster Recovery (DR): Disaster recovery plans ensure business continuity in the event of a disruptive event, such as a natural disaster or system failure. DR strategies typically involve replicating data and applications to a secondary cloud region or a different cloud provider. This allows for rapid failover in case of an outage. Consider a financial institution that must maintain continuous operations. Their DR plan might involve real-time data replication to a geographically diverse region to ensure immediate failover in case of a disaster.

- Data Archiving: Data archiving involves moving older, less frequently accessed data to a lower-cost storage tier. This helps reduce storage costs while retaining data for compliance and historical analysis purposes. Data archiving can be implemented using cloud-native solutions like Amazon S3 Glacier or Azure Archive Storage.

- Data Retention Policies: Establishing data retention policies defines how long data should be stored, based on business and regulatory requirements. These policies should be clearly documented and enforced to avoid unnecessary data storage and potential legal liabilities.

- Data Lifecycle Management: Data lifecycle management automates the movement of data between different storage tiers based on its age, access frequency, and business value. This strategy optimizes storage costs and ensures data is stored in the most appropriate location.
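Retention policies and lifecycle management reduce to a small decision rule. The Python sketch below shows one possible rule; the tier names, the 30-day and 90-day thresholds, and the seven-year retention period are assumptions chosen for illustration, and real values would come from business and regulatory requirements.

```python
from datetime import date


def choose_storage_action(created: date, last_accessed: date, today: date,
                          retention_days: int = 7 * 365) -> str:
    """Pick a storage tier, or deletion, from an object's age and idle time."""
    # Retention is measured from creation: past the limit, the data is disposed of.
    if (today - created).days >= retention_days:
        return "delete"

    # Tiering is driven by how long the object has gone without being read.
    idle_days = (today - last_accessed).days
    if idle_days < 30:
        return "hot"
    if idle_days < 90:
        return "infrequent-access"
    return "archive"


today = date(2024, 6, 30)
print(choose_storage_action(date(2024, 1, 1), date(2024, 6, 20), today))  # hot
print(choose_storage_action(date(2024, 1, 1), date(2024, 1, 15), today))  # archive
```

Managed lifecycle features in object storage services apply rules of this kind automatically once they are configured.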

Implementing Data Encryption

Data encryption is a critical security measure for protecting data confidentiality, both at rest and in transit.

- Encryption at Rest: Encryption at rest protects data stored in cloud storage services from unauthorized access. This can be implemented using:

    - Server-Side Encryption (SSE): The cloud provider encrypts data as it is written and decrypts it when it is read. By default the provider also manages the encryption keys. This is often the simplest method to implement.

    - Client-Side Encryption: The data is encrypted by the client before it is uploaded to the cloud. The client manages the encryption keys, providing greater control over data security.

    - Customer-Managed Keys (CMK): The organization controls the keys used for encryption, typically through a key management service offered by the cloud provider, providing greater control over key rotation and access.

- Encryption in Transit: Encryption in transit protects data as it moves between different locations, such as between a user’s device and the cloud, or between different cloud services.

    - Transport Layer Security (TLS): TLS, the successor to the now-deprecated SSL protocol, is used to encrypt network traffic. This ensures that data transmitted over the internet is protected from eavesdropping and tampering.

    - Virtual Private Networks (VPNs): VPNs create secure tunnels for transmitting data between a user’s device and the cloud. VPNs encrypt all traffic passing through the tunnel, ensuring data confidentiality.

    - HTTPS: HTTPS is HTTP carried over TLS. Using it for web applications ensures that all communication between the web browser and the server is encrypted. This protects sensitive information like passwords and credit card details.
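On the client side, encryption in transit largely comes down to refusing weak protocol versions and verifying certificates. The following sketch uses Python's standard `ssl` module. The helper name is hypothetical, and TLS 1.2 as the minimum version is an assumed policy, not a requirement stated by any particular provider.

```python
import ssl


def make_strict_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies certificates and hostnames."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse anything older than TLS 1.2 (assumed minimum for this sketch).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_strict_tls_context()
print(context.minimum_version, context.verify_mode, context.check_hostname)
```

The resulting context can be passed to standard library clients, for example through the `context` argument of `urllib.request.urlopen`.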

Automation and Infrastructure as Code (IaC)

Automating cloud infrastructure deployment and management is crucial for achieving agility, scalability, and cost-effectiveness. Infrastructure as Code (IaC) is a key practice in this area, allowing infrastructure to be treated like software. This approach enables consistent, repeatable, and version-controlled infrastructure deployments, reducing manual errors and improving operational efficiency.

Benefits of Infrastructure as Code

IaC offers significant advantages in cloud architecture design and management. These benefits streamline operations, enhance security, and improve overall efficiency.

- Automation and Repeatability: IaC automates the provisioning and configuration of infrastructure resources. This ensures consistent deployments across environments (development, testing, production) and eliminates manual errors associated with human intervention. For instance, instead of manually configuring a virtual machine, IaC scripts can consistently deploy the same configuration every time.

- Version Control and Collaboration: IaC code is stored in version control systems (e.g., Git), allowing for tracking changes, reverting to previous configurations, and facilitating collaboration among teams. This ensures that infrastructure changes are documented, auditable, and easily rolled back if necessary.

- Improved Efficiency and Speed: IaC significantly reduces the time required to provision and configure infrastructure. Automated deployments are faster than manual processes, enabling faster release cycles and quicker responses to changing business needs. For example, a complex application environment that might take days to set up manually can be deployed in hours using IaC.

- Cost Optimization: IaC does not scale resources by itself, but it makes cost controls easy to codify. Autoscaling policies that scale resources up or down based on traffic patterns can be defined in code and applied consistently, and temporary environments can be created and destroyed on demand. This leads to optimized resource utilization and helps avoid over-provisioning and its associated costs.

- Enhanced Security: IaC promotes security best practices by allowing security configurations to be defined and enforced consistently across all environments. Security policies can be codified and version-controlled, ensuring that security measures are always applied during deployment.

Examples of IaC Tools

Several tools are available for implementing IaC, each with its strengths and weaknesses. The choice of tool depends on the specific cloud provider, complexity of the infrastructure, and team’s existing skills.

- Terraform: Terraform is a popular IaC tool developed by HashiCorp. It was long distributed as open source and was relicensed in 2023 under the Business Source License; OpenTofu is an open-source fork. It supports multiple cloud providers (AWS, Azure, Google Cloud, etc.) and allows for infrastructure to be defined in a declarative configuration language (HCL, the HashiCorp Configuration Language). Terraform uses a state file to track the current state of the infrastructure and manages changes accordingly.

- CloudFormation: CloudFormation is an IaC service provided by Amazon Web Services (AWS). It allows users to define infrastructure resources using YAML or JSON templates. CloudFormation manages the creation, modification, and deletion of AWS resources based on these templates. It integrates directly with AWS services, making it well-suited for AWS-centric environments.

- Azure Resource Manager (ARM) Templates: ARM templates are the IaC solution for Microsoft Azure. They are JSON files that define the infrastructure resources and their configurations. ARM templates are deployed through the Azure portal, Azure CLI, or PowerShell, enabling the automated deployment of Azure resources.

- Google Cloud Deployment Manager: Google Cloud Deployment Manager is Google Cloud’s IaC tool. It uses YAML or Python templates to define and manage Google Cloud resources. Deployment Manager supports various resource types and allows for the creation of complex infrastructure deployments.

Code Snippet: Deploying a Simple Web Server with Terraform

The following code snippet demonstrates deploying a simple web server (using an EC2 instance) on AWS using Terraform. This example showcases the basic structure of a Terraform configuration.

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

provider "aws" {
  region = "us-east-1" # Replace with your desired region
}

resource "aws_instance" "example" {
  ami           = "ami-0c55b90c53174160c" # Replace with a valid AMI for your region
  instance_type = "t2.micro"

  tags = {
    Name = "Terraform-Webserver"
  }
}

output "public_ip" {
  value = aws_instance.example.public_ip
}
```

Explanation:

- This Terraform configuration defines an AWS infrastructure.

- The `terraform` block specifies the required providers (AWS in this case) and their versions.

- The `provider "aws"` block configures the AWS provider, including the region to deploy resources.

- The `resource "aws_instance" "example"` block defines an EC2 instance. It specifies the AMI (Amazon Machine Image), instance type, and tags for the instance.

- The `output "public_ip"` block outputs the public IP address of the created instance.

To deploy this configuration, you would run `terraform init`, `terraform plan`, and `terraform apply`. As written, the configuration only launches the instance; installing web server software and opening HTTP access through a security group would be additional steps.

Monitoring and Logging: Ensuring Observability

Monitoring and logging are critical pillars of a well-architected cloud environment, enabling proactive identification of issues, performance optimization, and informed decision-making. They provide the necessary visibility into the system’s behavior, facilitating effective troubleshooting, security incident response, and cost management. Without robust monitoring and logging, cloud deployments become opaque, making it difficult to understand system performance, identify bottlenecks, and maintain the overall health of the infrastructure.

Importance of Monitoring and Logging in a Cloud Environment

Cloud environments are inherently dynamic and complex. The ability to monitor and log is essential for managing this complexity and ensuring the availability, performance, and security of cloud resources.

- Performance Management: Monitoring key metrics like CPU utilization, memory usage, network latency, and disk I/O allows for identifying performance bottlenecks and optimizing resource allocation. Logging provides detailed insights into application behavior, helping pinpoint slow queries, inefficient code, or other performance-related issues.

- Incident Response: Real-time monitoring and logging enable rapid detection and diagnosis of incidents. Alerts triggered by anomalies, such as increased error rates or unusual traffic patterns, allow for immediate investigation and remediation, minimizing downtime and impact.

- Security and Compliance: Comprehensive logging provides an audit trail of all activities within the cloud environment, which is crucial for security investigations, compliance audits, and detecting malicious activities. Monitoring can also be configured to detect and alert on suspicious behavior, such as unauthorized access attempts or data exfiltration.

- Cost Optimization: Monitoring resource usage and identifying underutilized resources can help optimize cloud spending. Logging provides insights into application behavior, allowing for the identification of areas where cost-saving measures can be implemented, such as optimizing database queries or scaling resources more efficiently.

- Capacity Planning: Analyzing historical monitoring data and trends helps predict future resource needs, enabling proactive capacity planning. This ensures that the cloud environment has sufficient resources to meet demand, preventing performance degradation and ensuring a positive user experience.

Examples of Monitoring Tools and Their Functionalities

A wide range of tools are available for monitoring and logging in cloud environments, each offering different capabilities and features. The choice of tools depends on the specific cloud provider, the complexity of the infrastructure, and the monitoring requirements.

- CloudWatch (AWS): AWS CloudWatch is a comprehensive monitoring service that provides real-time monitoring, logging, and alerting for AWS resources. It collects metrics from various services, such as EC2, S3, and RDS, and allows users to create dashboards, set alarms, and analyze logs.

- Azure Monitor (Azure): Azure Monitor provides a centralized monitoring solution for Azure resources, including virtual machines, storage accounts, and databases. It collects metrics, logs, and application insights, enabling users to visualize data, set alerts, and troubleshoot issues.

- Google Cloud Monitoring (GCP): Google Cloud Monitoring, formerly Stackdriver, is a monitoring service that provides visibility into the performance, availability, and health of applications running on Google Cloud. It collects metrics, logs, and traces, and offers features such as dashboards, alerting, and log analysis.

- Prometheus: Prometheus is an open-source monitoring and alerting toolkit that is widely used for monitoring containerized applications and microservices. It collects metrics from various sources, stores them in a time-series database, and provides a query language for analysis and visualization.

- Grafana: Grafana is an open-source data visualization and dashboarding tool that can be used to visualize metrics from various sources, including Prometheus, CloudWatch, and Azure Monitor. It allows users to create custom dashboards and share them with others.

- Elasticsearch, Logstash, and Kibana (ELK Stack): The ELK stack is a popular open-source log management solution that allows users to collect, process, and analyze logs from various sources. Elasticsearch is a search and analytics engine, Logstash is a data processing pipeline, and Kibana is a visualization and dashboarding tool.

Setting up Logging and Alerting for a Specific Cloud Service

The following steps illustrate how to set up logging and alerting for a hypothetical web application running on an AWS EC2 instance.

1. Configure Logging:

- Install and configure a logging agent (e.g., Fluentd or the CloudWatch agent) on the EC2 instance.

- Configure the logging agent to collect application logs (e.g., from application server logs, access logs) and send them to CloudWatch Logs.

- Define log groups in CloudWatch Logs to organize logs based on application, environment, or other criteria.

2. Create Metrics from Logs:

- Use CloudWatch Logs Insights to query and analyze the logs.

- Create metric filters to extract specific data points from the logs (e.g., error counts, request latencies).

- Publish these extracted data points as custom metrics in CloudWatch.

3. Set up Alerts:

- Create CloudWatch alarms based on the custom metrics.

- Configure the alarms to trigger notifications (e.g., email, SMS, or integration with incident management systems) when specific thresholds are exceeded (e.g., error rate above a certain level, average request latency above a certain threshold).

- Configure alarm actions to automatically take corrective actions (e.g., triggering an Auto Scaling policy to add capacity, or running an automated remediation script).

4. Monitor and Iterate:

- Regularly review the logs, metrics, and alerts to identify areas for improvement.

- Adjust the logging configuration, metric filters, and alarm thresholds as needed to optimize monitoring and alerting.
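The pipeline in steps 2 and 3, turning log lines into a metric and evaluating an alarm on it, can be modelled locally in a few lines of Python. This is a simplified simulation rather than CloudWatch itself: the log line format, the function names, and the rule that every recent datapoint must breach the threshold are assumptions made for illustration.

```python
from collections import Counter


def errors_per_minute(log_lines, minutes):
    """Metric-filter analogue: count ERROR lines for each requested minute."""
    counts = Counter()
    for line in log_lines:
        parts = line.split(" ", 2)            # "<timestamp> <LEVEL> <message>"
        if len(parts) >= 2 and parts[1] == "ERROR":
            counts[parts[0][:16]] += 1        # truncate timestamp to the minute
    return [counts.get(minute, 0) for minute in minutes]


def alarm_state(datapoints, threshold, evaluation_periods):
    """Alarm analogue: ALARM when all recent datapoints exceed the threshold."""
    recent = datapoints[-evaluation_periods:]
    if len(recent) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    return "ALARM" if all(value > threshold for value in recent) else "OK"


logs = [
    "2024-05-01T12:00:05 ERROR database timeout",
    "2024-05-01T12:00:40 INFO request served",
    "2024-05-01T12:01:10 ERROR database timeout",
    "2024-05-01T12:01:30 ERROR upstream returned 502",
    "2024-05-01T12:02:02 ERROR database timeout",
    "2024-05-01T12:02:45 ERROR database timeout",
]
window = ["2024-05-01T12:00", "2024-05-01T12:01", "2024-05-01T12:02"]
datapoints = errors_per_minute(logs, window)
print(datapoints, alarm_state(datapoints, threshold=1, evaluation_periods=2))
# [1, 2, 2] ALARM
```

Requiring several consecutive breaching periods, as modelled here, is a common way to keep a single noisy minute from paging anyone.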

Cost Optimization: Managing Cloud Spending

Optimizing cloud costs is crucial for maximizing the return on investment (ROI) of cloud deployments. It involves a multifaceted approach that considers various factors, from resource allocation to pricing models and monitoring. Effective cost management ensures that cloud resources are utilized efficiently, preventing unnecessary expenses and aligning cloud spending with business objectives.

Strategies for Optimizing Cloud Costs

A strategic approach to cost optimization involves several key areas. Implementing these strategies can significantly reduce cloud expenditure while maintaining performance and scalability.

- Right-Sizing: Right-sizing involves matching the compute resources (CPU, memory, storage) to the actual workload requirements. Over-provisioning leads to wasted resources and higher costs. Under-provisioning can lead to performance issues. Regularly monitoring resource utilization metrics (CPU utilization, memory usage, disk I/O) and adjusting instance sizes accordingly is essential. For example, a web server that consistently uses only 20% of its CPU capacity can be downsized to a smaller instance type.

- Reserved Instances/Committed Use Discounts: Cloud providers offer significant discounts for committing to use resources for a specific duration (typically one or three years). Reserved Instances (RIs) or Committed Use Discounts (CUDs) are most effective for workloads with predictable resource needs. By purchasing RIs or committing to CUDs, organizations can reduce their costs by up to 70% compared to on-demand pricing. For instance, a database server that is consistently running 24/7 can be a prime candidate for a reserved instance.

- Spot Instances/Preemptible VMs: Spot Instances (AWS) and Preemptible or Spot VMs (Google Cloud) offer significantly lower prices than on-demand instances, but the provider can reclaim them at short notice when it needs the capacity back. On AWS, an instance can also be interrupted if the Spot price rises above the maximum price the user has set; the older bidding model no longer applies. They are suitable for fault-tolerant workloads that can withstand interruptions, such as batch processing, data analysis, or development/testing environments. Because availability and prices fluctuate, these instances need careful monitoring and management.

- Scheduled Scaling: Automating the scaling of resources based on predictable demand patterns can optimize costs. For example, scaling down resources during off-peak hours (nights, weekends) and scaling up during peak hours. This prevents unnecessary spending on idle resources.

- Storage Tiering: Utilizing different storage tiers based on data access frequency and performance requirements can reduce storage costs. Frequently accessed data should be stored on faster, more expensive tiers (e.g., SSD), while less frequently accessed data can be stored on cheaper tiers (e.g., cold storage).

- Eliminating Idle Resources: Identifying and eliminating idle resources is crucial. This includes unused instances, unattached storage volumes, and orphaned resources. Regularly review the cloud environment to identify and terminate these resources.

- Choosing the Right Region: Pricing can vary between different cloud regions. Select the region that offers the most cost-effective pricing for your specific needs, considering factors such as data transfer costs and network latency.

- Monitoring and Alerting: Implement robust monitoring and alerting to track resource utilization, identify cost anomalies, and receive notifications when spending exceeds predefined thresholds.
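The right-sizing strategy in this list can be expressed as a simple rule over utilization samples. In the sketch below the 30% and 80% thresholds and the function name are assumptions; a real review would also weigh memory, disk I/O, and network metrics, as noted above.

```python
def rightsizing_recommendation(cpu_samples, low: float = 30.0, high: float = 80.0) -> str:
    """Suggest an action from CPU utilization samples given in percent."""
    if not cpu_samples:
        raise ValueError("at least one utilization sample is required")
    average = sum(cpu_samples) / len(cpu_samples)
    peak = max(cpu_samples)
    if peak < low:        # even the busiest sample leaves most capacity unused
        return "downsize"
    if average > high:    # sustained load close to the instance's limit
        return "upsize"
    return "keep"


print(rightsizing_recommendation([18, 20, 22, 25]))  # downsize
print(rightsizing_recommendation([85, 90, 95]))      # upsize
```

Using the peak rather than the average for the downsize decision is a conservative choice, so that short bursts are not starved after the change.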

Pricing Model Comparison

Cloud providers offer various pricing models for their services. Understanding these models is crucial for making informed decisions and optimizing costs. The following table compares pricing models for common cloud services.

| Service | Pricing Model | Description | Use Case |
|---|---|---|---|
| Compute (e.g., EC2, Compute Engine) | On-Demand | Pay for compute resources by the hour or second, with no upfront commitment. | Ideal for unpredictable workloads, development, and testing. |
| Compute (e.g., EC2, Compute Engine) | Reserved Instances/Committed Use Discounts | Commit to using compute resources for a specific duration (1 or 3 years) for a discounted price. | Suitable for steady-state workloads with predictable resource needs. |
| Compute (e.g., EC2, Compute Engine) | Spot Instances/Preemptible VMs | Use spare compute capacity at a discounted price, with the possibility of interruption at short notice. | Best for fault-tolerant workloads, batch processing, and non-critical tasks. |
| Storage (e.g., S3, Cloud Storage) | Pay-as-you-go (per GB) | Pay for the amount of storage used, with charges based on storage class (e.g., standard, infrequent access, cold storage). | For all storage needs, with different tiers based on access frequency and performance requirements. |
| Database (e.g., RDS, Cloud SQL) | Pay-as-you-go (per hour) + Storage + Network | Pay for database instances by the hour, plus storage and network usage. Options for reserved instances are also available. | For running databases, with options for different instance types and storage configurations. |
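Choosing between on-demand and committed pricing is a break-even calculation. The sketch below assumes a simplified model in which a commitment is billed for every hour of the month while on-demand is billed only for hours actually used. The hourly rates are hypothetical and not taken from any provider's price list.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12


def monthly_on_demand_cost(hourly_rate: float, utilization: float) -> float:
    """Cost when paying only for the fraction of the month the instance runs."""
    return hourly_rate * HOURS_PER_MONTH * utilization


def monthly_committed_cost(effective_hourly_rate: float) -> float:
    """Cost of a commitment, which is billed whether or not the instance runs."""
    return effective_hourly_rate * HOURS_PER_MONTH


def break_even_utilization(on_demand_rate: float, committed_rate: float) -> float:
    """Fraction of the month above which the commitment becomes cheaper."""
    return committed_rate / on_demand_rate


on_demand, committed = 0.10, 0.06   # hypothetical USD per hour
print(f"break-even at {break_even_utilization(on_demand, committed):.0%} utilization")
print(f"on-demand at 50%: {monthly_on_demand_cost(on_demand, 0.5):.2f}")
print(f"committed:        {monthly_committed_cost(committed):.2f}")
```

With these assumed rates, a workload running less than about 60% of the time is cheaper on demand, which is why commitments suit steady-state workloads.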

Using Cost Management Tools

Cost management tools are essential for tracking, analyzing, and optimizing cloud spending. These tools provide insights into resource consumption, cost trends, and potential areas for optimization. They offer functionalities to generate reports, set budgets, and receive alerts.

- Cloud Provider Native Tools: Each major cloud provider offers native cost management tools (e.g., AWS Cost Explorer, Google Cloud Cost Management, Azure Cost Management). These tools provide detailed cost breakdowns, resource utilization analysis, and budgeting capabilities.

- Cost Tracking and Reporting: Cost management tools allow users to track spending across different services, projects, and departments. Reports can be customized to show cost trends over time, identify cost drivers, and highlight areas for optimization.

- Budgeting and Alerts: Setting budgets and alerts is a proactive way to manage cloud spending. Users can define spending thresholds and receive notifications when spending exceeds those thresholds, preventing unexpected costs.

- Cost Allocation and Tagging: Tagging resources with relevant metadata (e.g., project, department, environment) allows for cost allocation and helps identify the cost of individual projects or services. This enables better cost control and accountability.

- Anomaly Detection: Some cost management tools use machine learning to detect cost anomalies, such as unexpected spikes in spending. This helps identify potential issues early and take corrective action.

- Recommendations: Many cost management tools provide recommendations for cost optimization, such as right-sizing instances, purchasing reserved instances, or optimizing storage usage. These recommendations are based on the analysis of resource utilization and cost data.
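
Tag-based cost allocation and budget alerts can be illustrated without any provider SDK. The sketch below assumes billing line items have already been exported as dictionaries with a `cost` field and an optional `tags` mapping. That record shape, the function names, and the threshold values are assumptions made for illustration, not the format of any specific provider's billing export.

```python
from collections import defaultdict


def allocate_costs(line_items, tag_key):
    """Sum cost per value of `tag_key`; items missing the tag go to 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        tags = item.get("tags") or {}
        totals[tags.get(tag_key, "untagged")] += item["cost"]
    return dict(totals)


def budget_alerts(spend, budget, thresholds=(0.5, 0.8, 1.0)):
    """Return the budget fractions that current spend has reached or exceeded."""
    return [t for t in thresholds if spend >= t * budget]


if __name__ == "__main__":
    # Hypothetical line items; real exports carry many more fields.
    items = [
        {"cost": 10.0, "tags": {"project": "web"}},
        {"cost": 5.0, "tags": {"project": "web"}},
        {"cost": 2.5, "tags": {}},
        {"cost": 4.0},
    ]
    print(allocate_costs(items, "project"))
    print(budget_alerts(spend=85, budget=100))
```

The size of the `untagged` bucket is itself a useful signal: a large value suggests the tagging policy is not being enforced, which undermines cost accountability.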

High Availability and Disaster Recovery: Ensuring Business Continuity

The ability to maintain business operations, even in the face of unforeseen disruptions, is paramount in cloud environments. High Availability (HA) and Disaster Recovery (DR) are crucial components of a robust cloud architecture, designed to minimize downtime and data loss. These strategies are not interchangeable; HA focuses on preventing service interruptions within a single region, while DR addresses recovery from catastrophic events that impact an entire region.

Concepts of High Availability and Disaster Recovery

High Availability aims to ensure continuous operation of critical applications and services. It focuses on redundancy and fault tolerance within a single geographic region. Disaster Recovery, on the other hand, focuses on the ability to restore operations after a significant disruptive event, such as a natural disaster or widespread infrastructure failure.

- High Availability (HA): HA systems employ redundancy to eliminate single points of failure. This can involve:

  - Redundant servers: Multiple servers running the same application, configured to automatically take over if one fails.

  - Load balancing: Distributing traffic across multiple servers to prevent overload and ensure consistent performance.

  - Database replication: Maintaining multiple copies of the database in different availability zones or regions to ensure data availability.

- Disaster Recovery (DR): DR strategies involve planning for and recovering from catastrophic events that render an entire region unusable. Key components include:

  - Replication to a secondary region: Copying data and applications to a geographically separate region.

  - Failover mechanisms: Automatically switching to the secondary region in case of a primary region outage.

  - Recovery Time Objective (RTO): The maximum acceptable time to restore service after a disaster.

  - Recovery Point Objective (RPO): The maximum acceptable data loss in the event of a disaster.
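
The effect of redundancy on availability can be estimated with simple probability. The sketch below assumes that replicas fail independently and that the service stays up as long as at least one replica is healthy. Both are simplifying assumptions: real failures are often correlated, for example through a shared dependency or a bad deployment. The function names are illustrative.

```python
def parallel_availability(single_availability: float, replicas: int) -> float:
    """Availability of N independent replicas where any one can serve traffic."""
    return 1 - (1 - single_availability) ** replicas


def annual_downtime_minutes(availability: float) -> float:
    """Expected downtime per (non-leap) year for a given availability."""
    return (1 - availability) * 365 * 24 * 60


if __name__ == "__main__":
    for n in (1, 2, 3):
        a = parallel_availability(0.99, n)
        print(f"{n} replica(s): {a:.6f} availability, "
              f"{annual_downtime_minutes(a):.1f} min/year downtime")
```

Under these assumptions, two replicas that are each 99% available give roughly 99.99% combined availability, which is the reasoning behind eliminating single points of failure.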

Multi-Region Deployment Diagram for Disaster Recovery

A multi-region deployment is a common strategy for implementing DR. This approach involves deploying applications and data across multiple geographic regions, allowing for failover to a backup region if the primary region becomes unavailable. The following diagram illustrates a basic multi-region deployment:

Diagram Description: The diagram depicts a multi-region deployment for disaster recovery. Two distinct geographic regions, labeled “Region A” (Primary) and “Region B” (Secondary/DR), are shown. Each region contains a similar infrastructure setup, including:

- Compute Instances: Represented by server icons, these host the application workloads. Region A has active compute instances, while Region B has compute instances that are typically in a standby or passive mode, ready to be activated in case of a failover.

- Load Balancers: Shown as a box with an arrow pointing to the compute instances, the load balancer distributes traffic across the compute instances in each region. In Region A, the load balancer is active, handling incoming user requests. In Region B, the load balancer is typically configured to be inactive or to direct traffic only for health checks until a failover is triggered.

- Database Servers: Represented by database icons, these store the application’s data. Data replication occurs between the database servers in Region A and Region B. This replication ensures that data is continuously synchronized, minimizing data loss in the event of a disaster. The replication can be asynchronous or synchronous, depending on the RPO requirements.

- Connectivity: Arrows indicate the flow of traffic between the user and the regions. The user’s traffic primarily flows to Region A. A dotted arrow represents the failover mechanism, showing how traffic can be redirected to Region B if Region A becomes unavailable.

- DNS: The Domain Name System (DNS) is also involved. The DNS records are updated to point to Region B’s load balancer upon failover, allowing users to access the application in the secondary region.

This setup ensures that if Region A experiences an outage, traffic can be redirected to Region B, minimizing downtime and data loss. The degree of data synchronization (synchronous or asynchronous replication) and the failover mechanism (manual or automated) are determined by the Recovery Time Objective (RTO) and Recovery Point Objective (RPO) requirements.
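
The link between the objectives and the design choices can be made concrete with a small check. The sketch below is a simplification under stated assumptions: an RPO of zero is treated as requiring synchronous replication, and data loss under asynchronous replication is approximated by the worst observed replication lag. The `Objectives` type and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objectives:
    rto_seconds: float  # maximum acceptable time to restore service
    rpo_seconds: float  # maximum acceptable window of lost data


def replication_mode(rpo_seconds: float) -> str:
    """Zero tolerated data loss implies synchronous replication (assumption)."""
    return "synchronous" if rpo_seconds == 0 else "asynchronous"


def meets_objectives(obj: Objectives,
                     measured_recovery_seconds: float,
                     worst_replication_lag_seconds: float) -> bool:
    """Compare figures measured in a DR drill against the stated objectives."""
    return (measured_recovery_seconds <= obj.rto_seconds
            and worst_replication_lag_seconds <= obj.rpo_seconds)


if __name__ == "__main__":
    obj = Objectives(rto_seconds=3600, rpo_seconds=300)
    print(replication_mode(obj.rpo_seconds))
    print(meets_objectives(obj, measured_recovery_seconds=1800,
                           worst_replication_lag_seconds=120))
```

Synchronous replication across regions adds write latency, so a strict RPO carries a performance and cost trade-off that should be weighed against the business's actual tolerance for data loss.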

Procedures for Implementing Failover and Failback Mechanisms

Implementing effective failover and failback mechanisms is critical for a successful DR strategy. These procedures ensure a smooth transition to a secondary region during an outage and a return to the primary region once it’s operational again.

- Failover Mechanisms: Failover involves automatically or manually switching to the secondary region when the primary region becomes unavailable.

  - Automated Failover: This involves using monitoring tools and automated scripts to detect failures and trigger the failover process. Examples include:

    - Health checks: Regularly monitoring the health of the application and infrastructure components in the primary region.

    - Automated DNS updates: Automatically updating DNS records to point to the secondary region’s resources.

    - Scripted resource provisioning: Automating the startup of resources in the secondary region.

  - Manual Failover: This requires human intervention to initiate the failover process. This approach is typically used when automation is not feasible or when more control is needed. The steps involved include:

    - Verifying the outage: Confirming that the primary region is indeed unavailable.

    - Initiating the failover process: Manually updating DNS records, activating resources in the secondary region, and reconfiguring load balancers.

- Failback Mechanisms: Failback involves returning to the primary region once it’s operational again.

  - Planned Failback: This occurs when the primary region is restored to its normal operating state. The steps include:

    - Verifying the primary region’s health: Ensuring that all components are functioning correctly.

    - Synchronizing data: Ensuring that any data changes made in the secondary region are replicated back to the primary region.

    - Updating DNS records: Directing traffic back to the primary region.

  - Unplanned Failback: This occurs if the secondary region experiences an outage while the primary region is still unavailable. In this case, recovery might involve restoring the primary region or failing over to another region. The steps are similar to the failover process.

- Testing and Validation: Regular testing of failover and failback procedures is essential to ensure they function as expected.

  - Failover Drills: Simulating outages to test the failover process.

  - Failback Drills: Testing the failback process to ensure a smooth return to the primary region.

  - Documentation: Maintaining comprehensive documentation of all failover and failback procedures.
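
The failover and failback procedures above can be sketched as a small state machine. This is an illustrative model only: the `FailoverController` class, its method names, and the threshold of three consecutive failed health checks are assumptions, and the DNS update is represented by changing the `active` attribute rather than by calling a real DNS API.

```python
class FailoverController:
    """Tracks which region should receive traffic, based on health checks."""

    def __init__(self, primary: str, secondary: str, failure_threshold: int = 3):
        self.primary = primary
        self.secondary = secondary
        self.failure_threshold = failure_threshold
        self.active = primary  # region that DNS would currently point to
        self._consecutive_failures = 0

    def record_health_check(self, primary_healthy: bool) -> str:
        """Fail over after `failure_threshold` consecutive failed checks."""
        if self.active != self.primary:
            return self.active  # already failed over; failback is deliberate
        if primary_healthy:
            self._consecutive_failures = 0
        else:
            self._consecutive_failures += 1
            if self._consecutive_failures >= self.failure_threshold:
                self.active = self.secondary
        return self.active

    def fail_back(self, primary_healthy: bool, data_synchronized: bool) -> bool:
        """Planned failback: only when the primary is healthy and data is in sync."""
        if (self.active == self.secondary
                and primary_healthy and data_synchronized):
            self.active = self.primary
            self._consecutive_failures = 0
            return True
        return False


if __name__ == "__main__":
    ctl = FailoverController("region-a", "region-b")
    for healthy in (False, False, False):
        print("active:", ctl.record_health_check(healthy))
    print("failback before sync:", ctl.fail_back(True, data_synchronized=False))
    print("failback after sync:", ctl.fail_back(True, data_synchronized=True))
```

Requiring several consecutive failures before switching avoids failing over on a single transient error, and gating failback on data synchronization mirrors the planned failback steps listed above. A model like this is also convenient for failover drills, since the decision logic can be exercised without touching production DNS.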

Final Review

In conclusion, designing a target cloud architecture demands a holistic approach, encompassing strategic planning, meticulous execution, and continuous optimization. By carefully considering business goals, technical requirements, and provider capabilities, organizations can construct a robust and scalable cloud infrastructure. The discussed strategies, from compute layer selection to cost management, are essential for maximizing cloud benefits and ensuring business continuity. The journey toward cloud maturity is ongoing, necessitating constant adaptation and a commitment to best practices.

Essential FAQs

What is the primary difference between Infrastructure as Code (IaC) and traditional infrastructure management?

IaC treats infrastructure like software, enabling version control, automation, and repeatability, unlike traditional manual configuration, which is prone to errors and lacks scalability.

How does a multi-VPC architecture enhance security?

A multi-VPC architecture isolates resources, creating security boundaries that limit the blast radius of potential security breaches and enable granular access control.

What are the key considerations when selecting a disaster recovery strategy?

Key considerations include Recovery Time Objective (RTO), Recovery Point Objective (RPO), cost, and the complexity of implementation. The chosen strategy must align with the business’s tolerance for downtime and data loss.